Jeff Blackburn, Regional VP of Sales at Dataspeed, was recently published in Transportation-as-a-Service (TAAS) Magazine. His article, “Simulation and By-Wire Vehicle Testing for Comprehensive Validation,” discusses the two types of testing that must be used in combination to validate AV software under development. You can read the article below or in the TAAS issue.

The Challenge

Autonomous vehicle (AV) verification is a never-ending engineering process that, when well executed, makes efficient use of limited resources to find and fix the greatest number of critical problems. The end goal is to address bugs, or undesired behaviors in the AV system stack, before they cause the vehicle to fail or crash. Simulation offers the promise of scaled testing at a low cost and with high speed. This mode of testing allows millions of tests to be run to ensure adequate coverage of edge and corner cases. Physical by-wire vehicle testing, while slower, more difficult, and more expensive to scale, offers one clear advantage: it does not involve any simplification or approximation of the real world. The two types of testing must be used in a coordinated and combined fashion to validate AV software under development.

Both simulation and physical by-wire vehicle testing are required to gain the necessary confidence that the behavioral competencies embodied in the software, sensors, and vehicle dynamics allow the vehicle to operate safely without human intervention or supervision. In short, the AV needs to perceive the world, accurately determine where it is in the world, plan and execute a strategy to move between points without collisions, and recognize and deal with unexpected conditions and events.

Flexibility of Simulations

A simulation built to test AV software functionality can be conceptualized as input conditions, an operating mechanism or mechanisms, and output conditions. The input conditions include the geometry of the static virtual scene, such as the road network, road markings, buildings, vegetation, signs, and signals. They also include information about the dynamic actors within a scene: their locations, speeds, paths, colors, etc. Lastly, they include the data coming from the virtual sensors mounted on the virtual EGO vehicle. The mechanism is the operation of the software stack or function being tested. It is the means by which the simulation produces an output from the input conditions during the course of a run. Output conditions refer to the commands sent by the mechanism to the vehicle dynamics control, resulting in a change in the EGO state due to steering, acceleration, or braking.
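The input, mechanism, and output framing above can be sketched in a few lines of Python. This is only an illustration: the class names, fields, and the toy braking rule are all assumptions made for the example, not part of any particular simulation tool or AV stack.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Actor:
    """A dynamic actor in the scene (hypothetical fields)."""
    position_m: float
    speed_mps: float


@dataclass
class InputConditions:
    """Static scene, dynamic actors, and virtual sensor data for one step."""
    static_scene: List[str]      # e.g. road network, signs, signals
    actors: List[Actor]
    sensor_ranges_m: List[float]  # ranges reported by a virtual sensor


@dataclass
class OutputConditions:
    """Commands sent to the vehicle dynamics control."""
    steering_rad: float
    accel_mps2: float  # negative values mean braking


# The mechanism is whatever software function is under test.
Mechanism = Callable[[InputConditions], OutputConditions]


def toy_brake_mechanism(inputs: InputConditions) -> OutputConditions:
    """Toy stand-in for the software under test: brake if anything is within 10 m."""
    nearest = min(inputs.sensor_ranges_m, default=float("inf"))
    accel = -3.0 if nearest < 10.0 else 0.5
    return OutputConditions(steering_rad=0.0, accel_mps2=accel)


def run_step(mechanism: Mechanism, inputs: InputConditions) -> OutputConditions:
    """One simulation step: input conditions in, output conditions out."""
    return mechanism(inputs)


if __name__ == "__main__":
    inputs = InputConditions(
        static_scene=["straight_road"],
        actors=[Actor(position_m=8.0, speed_mps=0.0)],
        sensor_ranges_m=[8.0],
    )
    print(run_step(toy_brake_mechanism, inputs))
```

Keeping the mechanism as a plain callable makes it easy to swap in a different function under test while holding the input conditions fixed.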

Simulation Limitations

A common criticism of simulation is that it can be used to “prove” anything and is thus of little or no scientific value. As the noted roboticist and MIT professor Rodney Brooks stated, “The problem with simulations is that they are doomed to succeed.” Or as the statistician George Box wrote in Robustness in Statistics, “All models [simulations] are wrong, but some are useful.” Therein lies the problem: how to ensure that the simulation captures the relevant aspects of the phenomenon being studied. Does it have the necessary correspondence and appropriate level of abstraction for the problem being simulated?

The simulation may fail if there is too little correspondence between the simulation’s structure and known aspects of reality. Correspondence can range from a nearly identical re-creation of physical phenomena to much simpler models that only provide a binary yes or no signal. While it’s easy to create simple sensor models under ideal conditions, it’s critical that the output data be detailed and accurate enough that the perception algorithm cannot distinguish between simulated and real data. Otherwise, algorithms developed in simulation with unrealistic data will generate inaccurate outputs when run on a real vehicle perceiving real-world data.

Consider a virtual radar sensor used in an AV simulation. The computer graphic textures or raytracing techniques used to develop a simple radar model may not match the results from real radar. Real radar reflections depend strongly on many factors, such as the geometry and material of the intersected object, as well as clutter and multipath effects. These effects have been studied at length, and in theory a physics-based radar model can be created to account for them. However, these models are very difficult to build and extremely difficult to validate, particularly for simulation tool suppliers that may not have staff with the requisite technical background and experience.

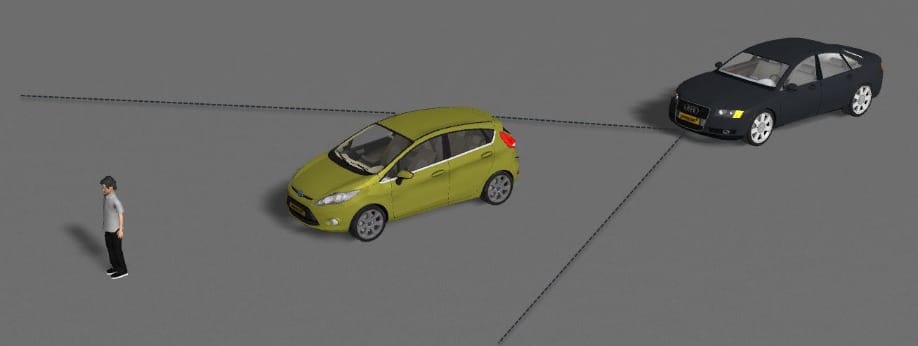

For example, in Figure 1, a weak signal return from the pedestrian is not received by the radar because the pedestrian is occluded by the vehicle. This phenomenon is well understood and is relatively easy to model in a virtual sensor.

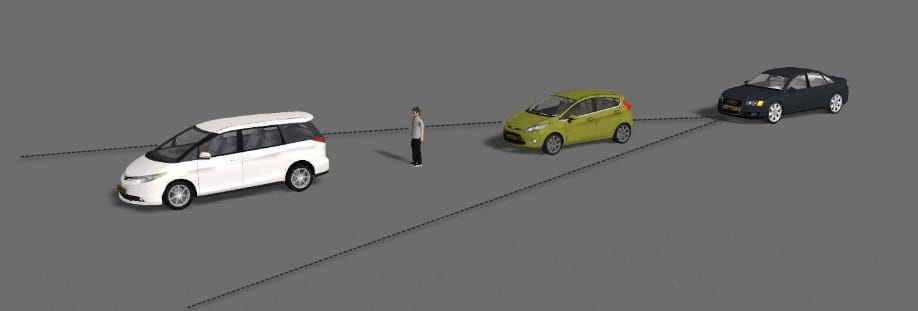

In Figure 2, the transmitted signal is reflected by the vehicle in front of the pedestrian and then propagates back to the receiver. In some published studies, the multipath reflections in this scenario amplify the received signal so that it appears much stronger, to the extent that the true positive rate is 4.5 times larger than when the vehicle in front is absent. This phenomenon is not as well understood, nor does it occur with the same consistency or signal characteristics as the earlier occlusion example.

The question, then, is how we should create a simulation model for the virtual radar given the above two scenarios. One model needs to account for both effects, as the only difference between the two physical scenarios is the presence of the leading car in example two. Ideally, the sensor model used in simulation should be created by the sensor supplier, as this is where the sensing expertise resides. Unfortunately, this rarely happens, as the sensor supplier likely lacks the expertise required to create virtual models suitable for use in simulation. They probably also lack a strong business case to support the time and cost required for this effort.

Instead, the sensor model will be created by the simulation supplier, relying on published research, which may be obsolete or incomplete. Lacking the technical expertise in sensing and the physical labs required to perform characterization tests, the simulation supplier can only assume that their sensor model has good correspondence with the physical sensor.

The Benefits of Real-World Testing

By-wire capability gives us the ability to perform multiple real-world tests with a high degree of repeatability. Repeatability refers to controlling the velocity and trajectory so that the vehicle is in the same relative position at the same time from test to test. By-wire computer control has been demonstrated to be much more repeatable in on-track testing than human test drivers. This is crucial in avoiding the introduction of additional variables that might skew test results.
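One way to put a number on repeatability as described above is to compare the vehicle's position at the same timestamps across repeated runs. The sketch below is a hypothetical metric, not a method taken from the article: it assumes each run is a list of along-track positions in meters sampled at identical timestamps, and it reports how far any single run strays from the across-run mean.

```python
from statistics import mean
from typing import List, Sequence


def repeatability_spread(runs: Sequence[Sequence[float]]) -> List[float]:
    """For each timestamp, return the largest absolute deviation of any
    run's position from the mean position across all runs."""
    if not runs:
        raise ValueError("at least one run is required")
    n_samples = len(runs[0])
    if any(len(run) != n_samples for run in runs):
        raise ValueError("all runs must be sampled at the same timestamps")

    spread = []
    # zip(*runs) groups the positions of every run at one timestamp.
    for positions in zip(*runs):
        centre = mean(positions)
        spread.append(max(abs(p - centre) for p in positions))
    return spread


if __name__ == "__main__":
    # Made-up positions (m) for three repeated runs at three timestamps.
    runs = [
        [0.0, 1.0, 2.0],
        [0.0, 1.2, 2.0],
        [0.0, 0.8, 2.0],
    ]
    print(repeatability_spread(runs))
```

A smaller spread at every timestamp means the vehicle was closer to the same relative position at the same time from test to test.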

Using the by-wire vehicle, we can now perform correspondence testing, comparing the results from real-world tests with virtual tests using simulations created with input conditions matching those of the physical tests. Real-world testing captures environmental effects on sensors that are often missed in a simulation environment. For example, specific material properties, such as an object having a specular surface, can produce a significant difference in results, one that only a real-world test may validate. For an end user, this is where access to a by-wire research vehicle with a physical radar sensor is very helpful, as it’s the only mechanism currently available as a check on the validity of the simulation results. If you’re using simulation without performing this type of correspondence testing, how much confidence can you have in simulation?
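A very simple form of correspondence testing can be sketched as follows. The example assumes that each scenario has been run several times both on the physical vehicle and in simulation, and that each run records whether the radar detected the target. The scenario names, the detection data, and the 0.1 tolerance are all invented for illustration.

```python
from typing import Dict, List


def detection_rate(detections: List[bool]) -> float:
    """Fraction of runs in which the target was detected."""
    if not detections:
        raise ValueError("at least one run is required")
    return sum(detections) / len(detections)


def correspondence_gaps(
    real: Dict[str, List[bool]],
    sim: Dict[str, List[bool]],
    tolerance: float = 0.1,
) -> Dict[str, float]:
    """Return scenarios whose simulated detection rate differs from the
    real-world rate by more than `tolerance` (simulated minus real)."""
    gaps = {}
    # Only scenarios run in both environments can be compared.
    for scenario in real.keys() & sim.keys():
        gap = detection_rate(sim[scenario]) - detection_rate(real[scenario])
        if abs(gap) > tolerance:
            gaps[scenario] = gap
    return gaps


if __name__ == "__main__":
    # Invented results: the simulated radar matches reality for plain
    # occlusion but under-detects when multipath reflections are present.
    real = {
        "occluded": [False] * 10,
        "multipath": [True] * 9 + [False],
    }
    sim = {
        "occluded": [False] * 10,
        "multipath": [True] * 2 + [False] * 8,
    }
    print(correspondence_gaps(real, sim))
```

A coarse check like this only flags where simulated and physical results diverge; it is a starting point for investigation rather than proof that the sensor model corresponds to the physical sensor.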

Correlation of Testing Results

With all that said, take care when comparing real-world results to virtual results, as correspondence between the simulation and the real world cannot always be judged merely point by point or variable by variable. There may be a correlation in linear dimensions, velocities, and surface areas, but if these are not understood with regard to the purposes of the simulation, poor results may still occur. Even what appear to be straightforward relationships are complicated by an array of factors.

The purpose of simulation is to capture the relevant aspects of real phenomena in simpler forms. These aspects are related to the information being sought and the real mechanisms behind the target phenomenon. Evaluating correspondence requires that this complexity in the real world be recognized, understood, and accounted for in the simulation environment. This understanding increases confidence that the virtual sensor model is performing in a manner similar to that observed in real-world testing. Only then should simulation be accepted as a proxy for real-world testing.

The Key to Accurate Validation

Once we have established sufficient confidence in our simulation tool and results, we can use it as a means of winnowing down an almost unlimited number of possible physical test scenarios to a more manageable number to be run on by-wire research vehicles. Since simulation always involves a simplification or approximation of the real world, physical testing using by-wire research vehicles must still be performed. Physical testing actually serves two purposes: real-world verification that our AV software is working as intended, and a continuing check to ensure that no relevant aspects of the physical phenomenon being tested have been missed and that predicted simulation results remain plausible. A coordination of both simulation and real-world by-wire vehicle testing must be our guidance going forward. As Richard Feynman, the Nobel Prize-winning physicist, famously stated in a lecture on the scientific method, “If it disagrees with experiment, it’s wrong. In that simple statement is the key to science. It doesn’t make any difference how beautiful your guess is, it doesn’t matter how smart you are, or who made the guess, or what his name is… If it disagrees with experiment, it’s wrong. That’s all there is to it.”