Dataspeed’s Sensor Calibration Tool provides a set of functions for setting up and calibrating a suite of lidars and cameras. The tool reduces the hassle of sensor setup so that the user can spend more time on autonomous driving or data collection research. The University of Tartu’s Autonomous Driving Lab (part of the Institute of Computer Science) selected the Dataspeed Sensor Calibration Tool because of Dataspeed’s reputation as one of the few well-known drive-by-wire providers.

The manual TF tool and its possibilities seem very useful and helped us in the process.

Kertu Toompea

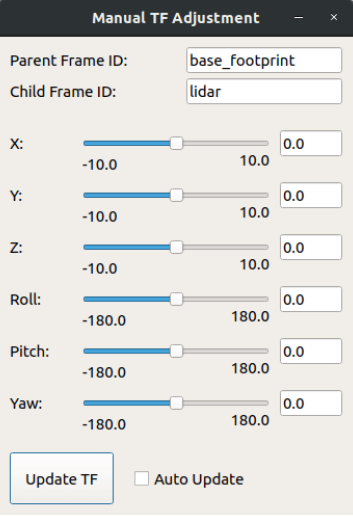

The manual TF frame adjustment tool has two main purposes:

- Allow the user to easily provide a good enough initial guess to the automatic calibration modes, which then refine the transform using data from the sensors.

- Allow the user to easily fine-tune the transform between sensors that cannot be calibrated with one of the automatic calibration modes.

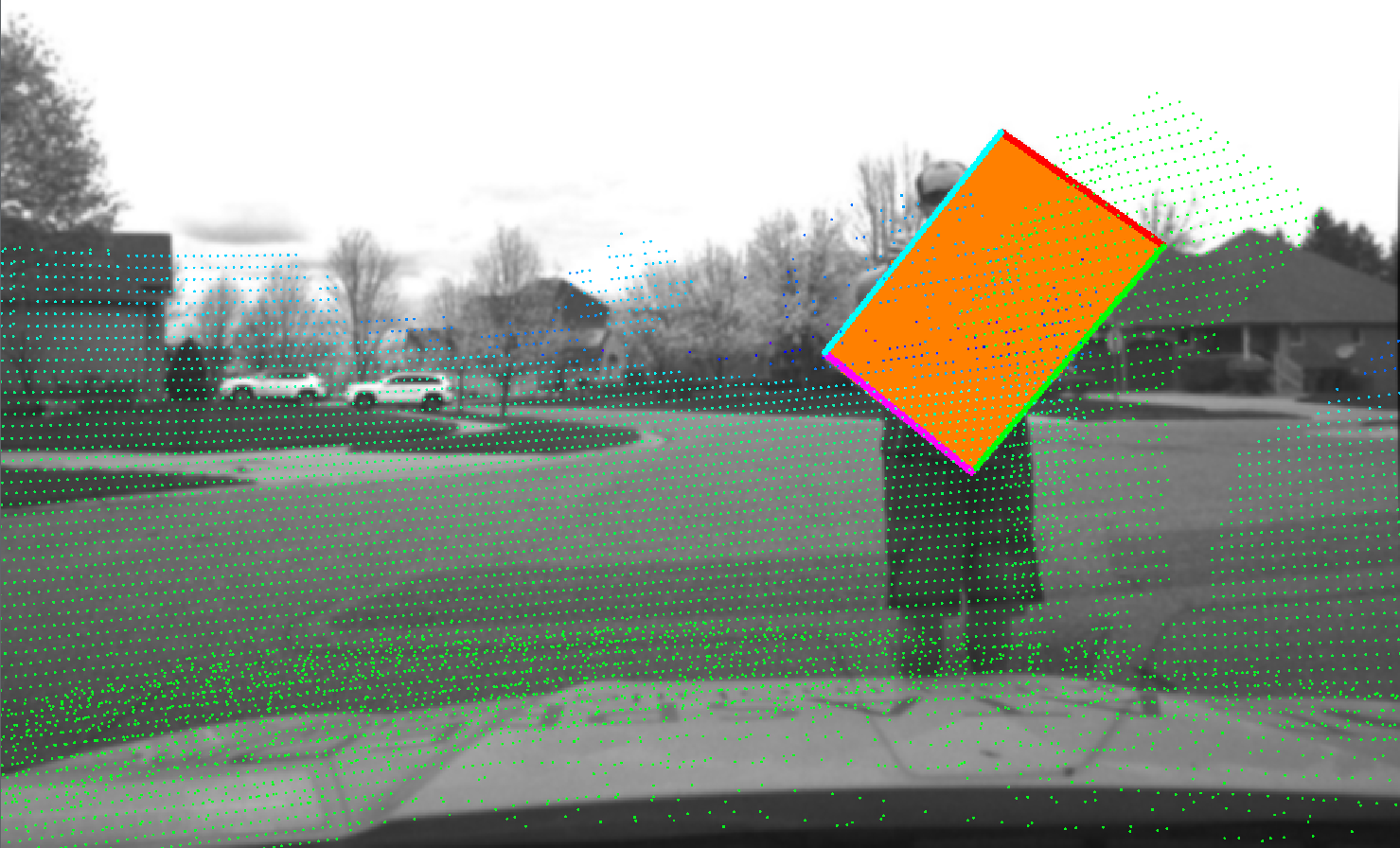

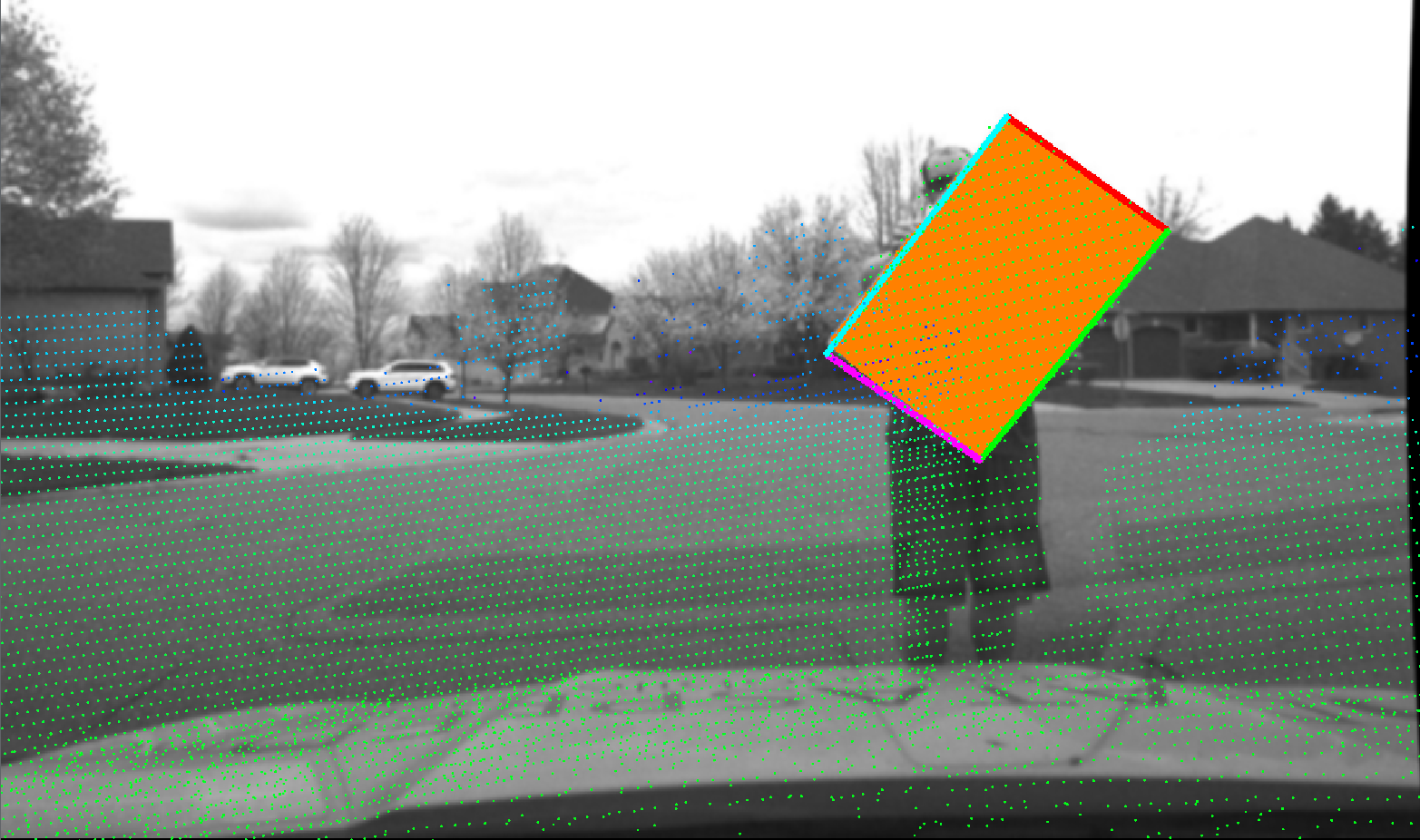

While the tool has four different calibration options, the University’s Autonomous Driving Lab was most interested in the camera-lidar alignment calibration mode. This mode uses a square or rectangular target board whose hue is distinct from the rest of the camera scene. The algorithm isolates the target board by thresholding hue and saturation against user-specified settings. The value channel of the image is ignored to mitigate the effects of shadows cast on the target board. The Autonomous Driving Lab currently uses this tool to calibrate some of the lidar and camera sensors on its car and to streamline its calibration process.
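The hue and saturation thresholding described above can be illustrated with a small sketch. This is not Dataspeed's implementation; the function name `target_mask`, the parameters `hue_range` and `min_saturation`, and the sample pixel values are all hypothetical. It only shows why ignoring the value channel lets a shadowed part of the board pass the same test as a brightly lit part.

```python
import colorsys

def target_mask(image_rgb, hue_range, min_saturation):
    """Mark pixels whose hue and saturation match the target board.

    image_rgb: rows of (r, g, b) tuples with components in 0..255.
    hue_range: (low, high) in degrees; low > high wraps through 0 (e.g. red).
    min_saturation: minimum saturation in 0..1.
    The value (brightness) channel is computed but never consulted.
    """
    low, high = hue_range
    mask = []
    for row in image_rgb:
        mask_row = []
        for r, g, b in row:
            h, s, _v = colorsys.rgb_to_hsv(r / 255.0, g / 255.0, b / 255.0)
            hue_deg = h * 360.0
            if low <= high:
                hue_ok = low <= hue_deg <= high
            else:
                hue_ok = hue_deg >= low or hue_deg <= high
            mask_row.append(hue_ok and s >= min_saturation)
        mask.append(mask_row)
    return mask

# Hypothetical 2x2 image: lit green board, shadowed green board,
# gray pavement, red object.
image = [
    [(0, 200, 0), (0, 80, 0)],
    [(128, 128, 128), (200, 0, 0)],
]
mask = target_mask(image, hue_range=(90, 150), min_saturation=0.5)
```

In this toy image both green pixels are selected even though one is much darker, while the gray and red pixels are rejected on saturation and hue respectively.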

Dataspeed Sensor Calibration Modes

- Lidar Ground Plane Alignment

This calibration mode inputs the point cloud from a 3D lidar sensor, detects the ground plane in the cloud, and then adjusts the roll angle, pitch angle, and z offset of the transform from vehicle frame to lidar frame such that the ground plane is level and positioned at z = 0 in vehicle frame.
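As a rough illustration of the geometry behind this mode, the sketch below fits a plane to points that are assumed to have already been identified as ground, then derives a roll, pitch, and z offset that level the plane at z = 0. Several things here are assumptions rather than facts about the tool: the helper names, the least-squares fit (the tool's own ground detection method is not described here), the rotation convention (pitch about y applied after roll about x, yaw left at zero), and the sample data.

```python
import math

def det3(m):
    """Determinant of a 3x3 matrix given as nested lists."""
    return (m[0][0] * (m[1][1] * m[2][2] - m[1][2] * m[2][1])
            - m[0][1] * (m[1][0] * m[2][2] - m[1][2] * m[2][0])
            + m[0][2] * (m[1][0] * m[2][1] - m[1][1] * m[2][0]))

def fit_plane(points):
    """Least-squares fit of z = a*x + b*y + c via the normal equations."""
    n = len(points)
    sx = sum(x for x, _, _ in points)
    sy = sum(y for _, y, _ in points)
    sz = sum(z for _, _, z in points)
    sxx = sum(x * x for x, _, _ in points)
    syy = sum(y * y for _, y, _ in points)
    sxy = sum(x * y for x, y, _ in points)
    sxz = sum(x * z for x, _, z in points)
    syz = sum(y * z for _, y, z in points)
    lhs = [[sxx, sxy, sx], [sxy, syy, sy], [sx, sy, n]]
    rhs = [sxz, syz, sz]
    d = det3(lhs)
    coeffs = []
    for col in range(3):  # Cramer's rule: swap one column for rhs
        m = [row[:] for row in lhs]
        for r in range(3):
            m[r][col] = rhs[r]
        coeffs.append(det3(m) / d)
    return tuple(coeffs)

def ground_correction(points):
    """Roll, pitch (radians) and z offset that level the fitted plane."""
    a, b, c = fit_plane(points)
    norm = math.sqrt(a * a + b * b + 1.0)
    nx, ny, nz = -a / norm, -b / norm, 1.0 / norm  # upward unit normal
    roll = math.atan2(ny, nz)
    pitch = -math.asin(nx)
    z_offset = -c / norm  # height of the lidar above the ground plane
    return roll, pitch, z_offset

def vehicle_z(point, roll, pitch, z_offset):
    """z of a lidar-frame point after applying Ry(pitch) @ Rx(roll) and z_offset."""
    x, y, z = point
    sr, cr = math.sin(roll), math.cos(roll)
    sp, cp = math.sin(pitch), math.cos(pitch)
    return -sp * x + cp * sr * y + cp * cr * z + z_offset

# Hypothetical ground points seen by a slightly tilted lidar about 1.8 m up.
ground = [(x, y, 0.05 * x - 0.02 * y - 1.8)
          for x in (-2, -1, 0, 1, 2) for y in (-2, -1, 0, 1, 2)]
roll, pitch, z_offset = ground_correction(ground)
```

After the correction, `vehicle_z` evaluates to approximately zero for every ground point, which is the "level and positioned at z = 0" condition stated above. Real point clouds contain non-ground points and noise, so a robust fit would be needed in practice.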

- Lidar-Lidar Alignment

This calibration mode inputs point clouds from two 3D lidar sensors and computes the translation and orientation between the sensors’ coordinate frames. It does this by comparing distinguishing features in the overlapping point clouds.

- Camera-Lidar Alignment

This calibration mode inputs a camera image and a point cloud from a 3D lidar sensor and computes the translation and orientation between the sensors’ coordinate frames. It does this by detecting edges and corners of a rectangular target board in both the camera image and the lidar point cloud and comparing multiple samples.
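One common way to compare board corners seen by both sensors is a reprojection error: transform the lidar corners into the camera frame with a candidate extrinsic, project them with a pinhole model, and measure the pixel distance to the corners found in the image. The sketch below shows only that comparison. It is an assumption that the tool uses anything like this cost; the function names, the intrinsics, the axis conventions (lidar x forward, y left, z up; camera z forward, x right, y down), and the corner values are all hypothetical.

```python
import math

def transform(point, rotation, translation):
    """Apply p_cam = R @ p_lidar + t, with R as a 3x3 nested list."""
    return tuple(
        sum(rotation[i][j] * point[j] for j in range(3)) + translation[i]
        for i in range(3)
    )

def project(point_cam, fx, fy, cx, cy):
    """Pinhole projection of a camera-frame point (z forward) to pixels."""
    x, y, z = point_cam
    return (fx * x / z + cx, fy * y / z + cy)

def mean_reprojection_error(lidar_corners, image_corners,
                            rotation, translation, intrinsics):
    """Mean pixel distance between projected lidar corners and image corners."""
    fx, fy, cx, cy = intrinsics
    total = 0.0
    for p_lidar, (u, v) in zip(lidar_corners, image_corners):
        pu, pv = project(transform(p_lidar, rotation, translation),
                         fx, fy, cx, cy)
        total += math.hypot(pu - u, pv - v)
    return total / len(lidar_corners)

# Hypothetical 1 m square board, 5 m in front of the lidar.
lidar_corners = [(5, 0.5, 0.5), (5, -0.5, 0.5), (5, -0.5, -0.5), (5, 0.5, -0.5)]
# Corners of the same board as detected in a hypothetical 1280x720 image.
image_corners = [(540, 260), (740, 260), (740, 460), (540, 460)]
intrinsics = (1000.0, 1000.0, 640.0, 360.0)  # fx, fy, cx, cy (assumed)

# Axis swap from the lidar frame to the camera optical frame.
axis_swap = [[0, -1, 0], [0, 0, -1], [1, 0, 0]]

good = mean_reprojection_error(lidar_corners, image_corners,
                               axis_swap, (0.0, 0.0, 0.0), intrinsics)
# Same rotation, but the camera is assumed 5 cm off along its x axis.
bad = mean_reprojection_error(lidar_corners, image_corners,
                              axis_swap, (0.05, 0.0, 0.0), intrinsics)
```

With these made-up numbers the correct extrinsic gives an error of essentially zero, while the 5 cm offset shifts every corner by roughly 10 pixels. Averaging such an error over multiple board poses is one way to make a calibration less sensitive to a single noisy sample.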

- Camera-Camera Extrinsics Overlay

The camera validation GUI can be used to validate the extrinsics between multiple cameras with overlapping fields of view.
