David Agnew, VP of Business Development at Dataspeed, was recently published in Transportation-as-a-Service (TAAS) Magazine. His article, “Is it Safe?: The Reason, and the Challenge, for Autonomous Cars,” discusses possible safety measures that should be met before public mass deployment of self-driving cars. You can read the article below or in the TAAS Issue.

The Challenge

We are currently in a period where not just the state of our technology, but the rate of advancement of our technology (think Moore’s law) is throwing the doors wide open to new solutions for improving our personal, daily mobility needs. Sharing vehicles and improving access to mobility through cloud applications are improving traffic flow and safety through connectivity. But the biggest game changer (by far, according to the headlines) appears to be self-driving cars. The promise of lower mobility cost, better access to cities, increased personal productivity, and improved safety is driving significant investment and development in autonomous technologies. It’s this last promise, the promise of increased safety, which has emerged as the guiding “north star” to keep industry, academia, and regulators focused on autonomous cars after several years of intense research and development (R&D) with the job not yet finished.

But many are also starting to come to grips with the possibility that the job of developing autonomy for broad deployment may be very far from over. What’s interesting (and a bit ironic) is that the primary challenge preventing the broad deployment of autonomous vehicles (AV) and their life-saving technology is this: They are not yet safe enough. This isn’t too different from developing new medical technology. You can’t save lives with a new miracle vaccine until you can prove it is safe enough. So, the full question is this: How safe is safe enough to start dramatically increasing safety?

The Reason for the R&D

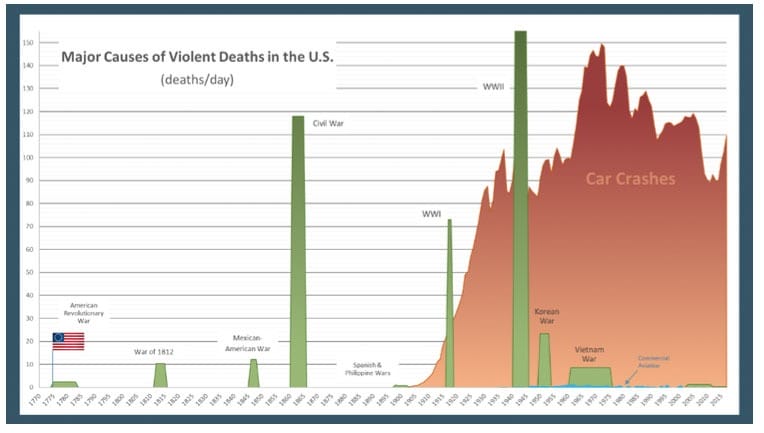

The message of safety as the reason for investing in the development of AV has been strongly communicated. When an unfortunate testing incident occurs, such as the pedestrian fatality in Arizona, many people outside the industry echo the message: “it’s a terrible loss, but we shouldn’t stop testing because AV will eventually replace human drivers, who cause 94% of all accidents.” This is a strong message that resonates with people and brings needed attention to the ongoing tragedy of roadway injuries and fatalities. The magnitude of society’s losses on our public roadways is staggering, yet we have become desensitized to it. The national news will often report on a private plane crash on a given day, but because it is the same every day, they do not report the 100 people who unexpectedly and violently met their deaths on our roadways in the U.S.

Consider the chart below for a final perspective on the relative tragedy of roadway crashes. It displays the daily loss of U.S. lives during the country’s major conflicts. It also shows the loss of life from commercial aircraft (difficult to see, in blue and mostly between WWII and 2010). It then dramatically shows the loss of life from driving cars. The area under each of the curves represents total lives lost. The worst characteristic of the roadway fatalities trend is that it hasn’t ended; it’s actually increasing.

So yes, the reason to go after making AV work is a sound one, and most agree that we should do it as quickly as possible. National governments around the world are behind it, our best research institutions are behind it, and our automotive and tech industries are pursuing it with vigor. So when will it be ready?

The Benchmark: “All Natural” Intelligence

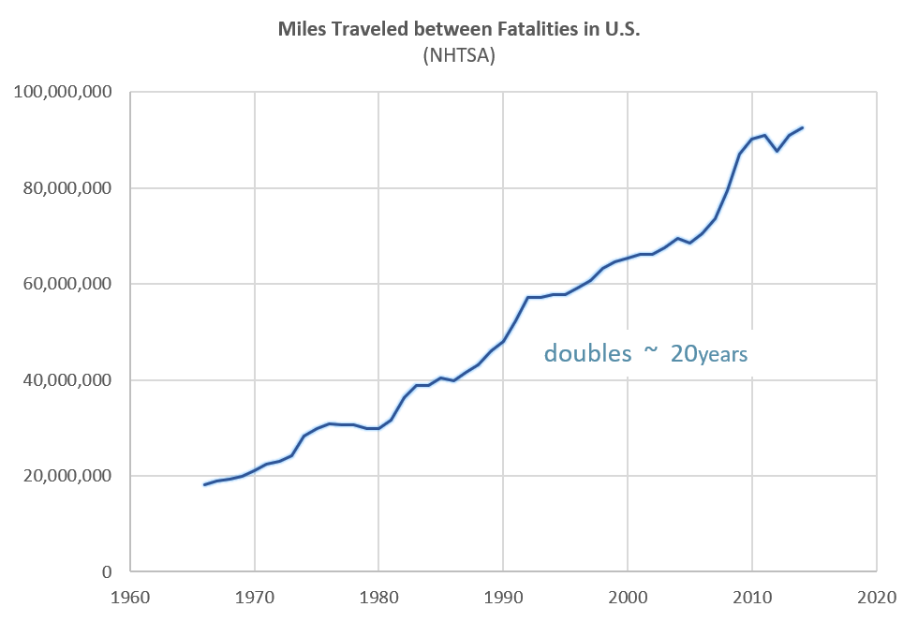

Here’s the story less told. We humans cause 94% of all the accidents, but that stat alone doesn’t tell the whole story. The value obtained from driving, the reason we risk going roller coaster speeds on open roadways with other drivers we don’t know, is the need for mobility. Getting from point A to point B, miles traveled, is the numerator in this performance equation. We human drivers are amazingly good at traveling a lot of miles before we make a “fatal error”. The overall average for the U.S. is around 100 million miles between fatalities (see NHTSA, “2017 Fatal Motor Vehicle Crashes,” DOT HS812603). This number is derived by taking the total annual miles traveled in the U.S., about 4 trillion, and dividing it by the total annual roadway fatalities, about 40,000.
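As a quick check of that division, here is a minimal Python sketch. The constant and function names are illustrative assumptions, and the inputs are the rounded figures quoted above rather than exact NHTSA values.

```python
# Rounded figures quoted in the article (not exact NHTSA values).
ANNUAL_MILES_TRAVELED = 4e12   # about 4 trillion miles per year in the U.S.
ANNUAL_FATALITIES = 40_000     # about 40,000 roadway fatalities per year


def miles_per_fatality(total_miles: float, fatalities: float) -> float:
    """Average miles driven per roadway fatality (hypothetical helper)."""
    if fatalities <= 0:
        raise ValueError("fatalities must be positive")
    return total_miles / fatalities


benchmark = miles_per_fatality(ANNUAL_MILES_TRAVELED, ANNUAL_FATALITIES)
print(f"{benchmark:,.0f} miles per fatality")  # prints "100,000,000 miles per fatality"
```

Miles traveled sits in the numerator, so the metric rewards both driving more and crashing less.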

As human drivers, our safety performance has improved five-fold in 50 years.

Yes, humans are the cause of most of our accidents, but we drive many miles between those accidents. Additionally, a lot of the crashes are caused by some concentrated groups of drivers such as the impaired, inexperienced, and distracted. By avoiding these behaviors, most people are driving at the rate of 1 billion miles or more between fatal errors. For simplicity, let’s stay with the 100 million miles/fatal error (100M m/fe) as a known benchmark of human driving performance.

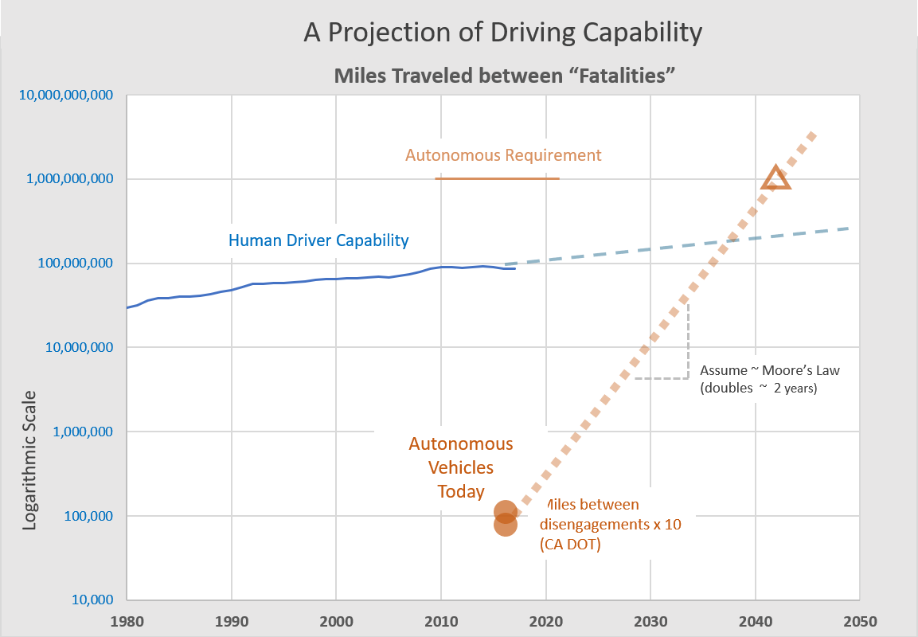

If we are going after AV technology to start saving the lives lost every day, every year on our roadways, then it is not enough to achieve the same benchmark as human drivers. If AI drivers can get us from point A to point B only with the same performance as the average U.S. human driver, 100M m/fe, then with all else being the same, no lives are being saved. So, let’s set a reasonable goal of improved safety at 10x better than the human driver benchmark, which is 1 billion miles/fatal event. If this level of performance replaced all human drivers, then our annual fatalities would be reduced to 10% of current levels. This is a nice round, understandable target for discussing and measuring AV driving performance: 1B miles/fatal event. This is the point where we realize, as engineers, that this is not a challenge for the faint of heart.
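The effect of the 10x target can be sketched the same way. This assumes, as the text does, that total miles traveled stay the same and that every driver performs at the stated rate; the names below are illustrative, not part of any published model.

```python
ANNUAL_MILES = 4e12                 # about 4 trillion miles per year (rounded)
HUMAN_BENCHMARK = 100e6             # 100M miles per fatal event
AV_TARGET = 10 * HUMAN_BENCHMARK    # 1B miles per fatal event


def expected_annual_fatalities(annual_miles: float, miles_per_fatal_event: float) -> float:
    """Expected fatalities per year if all miles were driven at the given rate."""
    return annual_miles / miles_per_fatal_event


human_fatalities = expected_annual_fatalities(ANNUAL_MILES, HUMAN_BENCHMARK)
target_fatalities = expected_annual_fatalities(ANNUAL_MILES, AV_TARGET)

print(f"Human benchmark: {human_fatalities:,.0f} fatalities per year")
print(f"10x target:      {target_fatalities:,.0f} fatalities per year")
```

With these rounded inputs, the target corresponds to about 4,000 fatalities a year instead of about 40,000.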

To travel 1B miles requires about 40 million gallons of gas, so it will cost $120 million just to pay the fuel bill for test vehicles. If your goal is to achieve 1B miles within six months (to support a design validation, say), roughly 6,000 vehicles will be needed running around the clock, 7 days a week. This requirement is not a minor task to be addressed while chasing the greater challenge of making autonomous vehicles work. This is THE AV challenge: How to establish credible evidence that an AV can drive with fewer serious mistakes than most humans. The players in the AV field have been demonstrating amazing driving capabilities for years now, but maneuvering performance is not the issue. The race that is really underway is how to develop a “case” to convince the internal CEOs, the investors, the regulators, and the public that an AV will meet this requirement of < 1 fatal event per 1B miles. Dataspeed’s unique position of providing vehicle by-wire platforms to the majority of the autonomous developers in this race provides some insight here. With approximately 500 vehicles in service to over 100 customers (the majority being in Silicon Valley), it is evident that there is a focus beyond just doing the driving tasks or maneuvers. In the end, an AV operating in the real world will be measured with scrutiny. If the prediction, or the “case” to justify full deployment, was wrong, and it is not discovered until after a few years of production that a given AV in the field is a worse driver than most human drivers, the potential financial impact is too large to be dismissed by any company.
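The fuel and fleet figures at the start of this section can be reproduced with a rough sketch. The fuel economy (25 mpg), fuel price ($3 per gallon), and test duration (182.5 days) below are assumptions chosen to match the totals quoted in the text; they are not stated in the article.

```python
# Inputs quoted in the article.
TARGET_MILES = 1e9        # 1 billion test miles
FLEET_SIZE = 6_000        # vehicles running around the clock

# Assumptions (not stated in the article; chosen to match its totals).
ASSUMED_MPG = 25.0                # implies about 40 million gallons
ASSUMED_PRICE_PER_GALLON = 3.0    # USD, implies about $120 million
ASSUMED_TEST_DAYS = 182.5         # "six months" taken as half a year


def fuel_gallons(miles: float, mpg: float) -> float:
    """Gallons of fuel needed to cover the given distance."""
    return miles / mpg


def required_average_speed(miles: float, vehicles: int, days: float) -> float:
    """Average speed (mph) each vehicle must hold, 24 hours a day, to hit the target."""
    hours = days * 24
    return miles / (vehicles * hours)


gallons = fuel_gallons(TARGET_MILES, ASSUMED_MPG)
fuel_bill = gallons * ASSUMED_PRICE_PER_GALLON
avg_speed_mph = required_average_speed(TARGET_MILES, FLEET_SIZE, ASSUMED_TEST_DAYS)

print(f"Fuel: {gallons:,.0f} gallons, about ${fuel_bill:,.0f}")
print(f"Each vehicle must average {avg_speed_mph:.1f} mph around the clock")
```

Under these assumptions each vehicle would need to average roughly 38 mph continuously, with no allowance for refueling, maintenance, or downtime, which suggests the 6,000-vehicle figure is a lower bound.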

Research and development for vehicle safety does not grab the headlines that going down the road with your hands out the sunroof does, but top engineering talent, processing power, and investment dollars are currently being applied with furious intensity to this key challenge of making autonomous vehicles a reality. So, the next questions are: “Are we there yet?”, and “When will we be there?”.

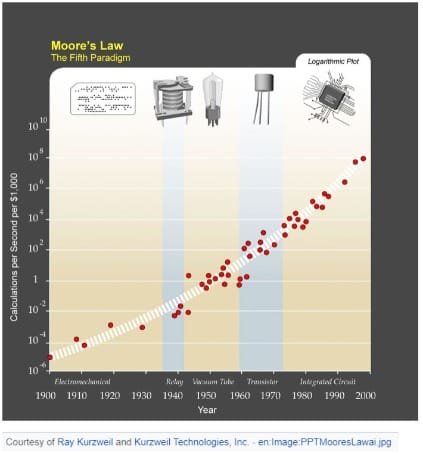

Ray Kurzweil and the Law of Accelerating Returns

In 2003, Martin Eberhard created a prediction of future battery performance based on the “Law of Accelerating Returns”, which is the idea that technology does not just keep increasing at a given rate; the rate of increase is itself constantly increasing (which means geometric growth, but we’ll come back to this). Eberhard used this prediction to convince prospective investors (i.e., Elon Musk) of the viability of his new company, Tesla. What’s key here is that Eberhard knew battery performance in 2003 would not support a feasible business model for his electric car. But he also knew about “accelerating returns”, and looking backwards he could see that battery performance was doubling roughly every 10 years (geometric growth). So he projected the same trend forward and made it part of his business plan. Today, few companies fail to account for Moore’s Law, which roughly states that the number of transistors fitting on a chip doubles every 18-24 months. Moore’s Law, which has been validated with a tremendous amount of historical evidence, is a subset of the broader “accelerating returns” idea, which was put forth by Ray Kurzweil in his 1999 book “The Age of Spiritual Machines”. Where Moore’s Law pertains only to integrated circuits, Kurzweil argues that there is an “accelerating returns” law of exponential growth that applies to many forms of technology.

Moore’s Law is the best known case of accelerating returns because of its early recognition by Moore and because its acceleration rate (doubling every 18-24 months) also roughly predicts the progress of many other digital technologies (memory capacity, calculation speed, computing costs…). So why all the math and technology futurism? The purpose here is to try to answer that question coming from the back seat that keeps interrupting our otherwise excellent road trip to autonomy. The predictable exponential growth of technology, especially computational technology, gives us the opportunity to make a prediction that is more than just a personal guess. Several years ago, just about every automotive company head was offering a prediction of when fully autonomous vehicles would be widely available. The year 2025 was an often reported value, maybe because it was far enough away, and it’s a nice round number. But there was not much justification for the predictions other than intuition. Now the value of intuition is not being questioned here, but I propose that it may be interesting to calculate an answer to our back seat question using the concept of accelerating returns. So here goes:

Let’s use the chart below to again look at “Miles Traveled between Fatalities in the U.S.” But this time, the vertical axis is logarithmic (each step is 10x the previous step) instead of linear (each step is the same value). Using this type of plot, exponential growth will show as a straight line over time, which will help us easily calculate our prediction. For reference, the shallow line shows NHTSA’s historical data for human drivers. Notice that the curve now appears to be a straight line (which suggests there may be a technology-driven accelerating return at work), with the slope showing that performance doubles roughly every ten years (similar to Eberhard’s battery performance). The solid line shows where we are today, 100 million m/fe. One step above this, at 1 billion miles, we can draw a horizontal line representing the requirement we established for AV driving. Now, what we need is a value to represent where AVs are today. How many miles could one drive, on U.S. roadways, before a fatal event? Each individual company developing AVs would consider this confidential, so it’s not published. However, the California DMV does require entities testing there to provide annual reports of active miles driven along with safety driver disengagements. These numbers of course are missing a lot of context, but we can provide an optimistic adjustment of 10x reported miles (in effect saying one in 10 of the disengagements could be a fatality either for the car occupants or other road users). This assumption can be argued, but I submit it as only a starting point to explain the method. Shown as orange circles on the horizontal line for 100,000 miles are two of the highest performers from CA’s website circa 2017 with the 10x factor applied (newer numbers are available, which I leave for others to investigate).

The final step is to apply the assumption that the improvement of AV safety performance will follow an exponential growth path (a straight line on our log plot) with an aggressive slope (this is a highly computation-driven technology), doubling every 2 years, similar to Moore’s law. This assumption is open for debate as well, but consider the tools and solutions being applied to solve the problem: massive simulation, deep neural networks, big data analytics…all being applied on a Google/Apple/Amazon (cloud) scale. It does seem to be a computational technology issue. With these few assumptions, all that is left is to extend our straight line for AV performance up to the 1 billion miles line, and we have an answer for our back seat voice. It looks like somewhere around 2041.
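For readers who want to try their own numbers, the extrapolation can be sketched in a few lines of Python. The function name is hypothetical, and the starting values are assumptions: the text only places the 2017 data points near the 100,000-mile line, so the exact chart values are not known here.

```python
import math


def year_target_reached(start_year: float, start_miles: float,
                        target_miles: float, doubling_years: float) -> float:
    """Year at which performance reaches the target, assuming steady doubling.

    Exponential growth is a straight line on a log plot, so the time needed
    is (number of doublings required) x (years per doubling).
    """
    doublings_needed = math.log2(target_miles / start_miles)
    return start_year + doublings_needed * doubling_years


TARGET = 1e9  # 1 billion miles per fatal event

# Assumed starting point: exactly 100,000 miles in 2017, doubling every 2 years.
from_100k = year_target_reached(2017, 100_000, TARGET, 2)

# Alternative assumed starting point, somewhat above the 100,000-mile line.
from_250k = year_target_reached(2017, 250_000, TARGET, 2)

print(f"Start at 100,000 miles: about {from_100k:.1f}")
print(f"Start at 250,000 miles: about {from_250k:.1f}")
```

A start of exactly 100,000 miles gives a crossing in the early 2040s (about 2043.6), while a start nearer 250,000 miles lands close to 2041. The answer is sensitive to both the starting point and the doubling period, which is why the result is best read as a rough estimate.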

Wrap Up

The intent of this discussion has not been to convince the reader that 2041 is the correct answer. It is clear the assumptions used (current AV performance, doubling every two years, etc.) lack fidelity. Even the stated problem is very broad. This is an estimate for when AVs can drive 10x safer than humans everywhere in the U.S. A prediction of when an AV can park itself more safely than a human would be a different set of numbers. I do submit, however, that this approach of considering the accelerating rate of technological progress offers some value in measuring and estimating when AVs may surpass human drivers and begin saving lives. Because saving lives is what many are really in this for, I also submit that the metric of “miles between fatal events” will be the one of importance, to be tracked with great interest. Taking this as the metric and setting the correct target value (such as 1 billion miles) as the “finish line”, we can then make an attempt at answering “how long until we’re there?”.